Lately my feed is full of posts claiming that TOON (Token-Oriented Object Notation) cuts LLM token usage by 30–60% and that JSON is basically “legacy bloat”. As someone who spends her days helping customers bring AI into production at Oracle, I was curious… but also a bit skeptical.

So instead of arguing in the comments, I opened a notebook and did a tiny, practical experiment:

If I take a realistic JSON payload and encode it in different ways, how much do I actually save in tokens?

Short version:

- TOON-like formats really can be dramatically more compact than pretty JSON.

- But once you minify JSON and stop abusing whitespace, the gap shrinks a lot.

- And in production, the biggest savings usually don’t come from switching formats.

Let’s walk through what I did and what I found.

Why we suddenly care so much about formats

Every token you send to a Large Language Model:

- Costs money 💸

- Consumes context window 🧠

- Adds latency ⏱️

So it’s natural that people are excited about formats that reduce tokens without losing information.

JSON was never designed for LLMs. It’s human-readable and verbose, and in a list of records it repeats every field name in every object:

```json
[
  {
    "id": 1,
    "name": "User 1",
    "country": "DE",
    "purchases": 8,
    "segment": "standard"
  }
]
```

TOON, on the other hand, is designed with LLM prompts in mind: more tabular, less punctuation, fewer repeated keys. Conceptually, a list of such users (here, three of them) might look like this:

```text
users[3]{id,name,country,purchases,segment}:
  1,User 1,DE,8,standard
  2,User 2,FR,38,churn_risk
  3,User 3,IT,27,new
```

You instantly see where the token savings come from:

- Keys listed once.

- Less structural punctuation.

- Rows are just values.
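To check that listing the keys once really loses no information, here is a minimal Python sketch that parses the TOON-like table above back into JSON-style records. It is a toy, not the official TOON decoder: it assumes a single flat table, no quoting or escaping, and it guesses that any all-digit value is an integer.

```python
import json


def parse_toon_table(text: str) -> tuple[str, list[dict]]:
    """Parse one flat TOON-like table into (name, records).

    Simplified sketch: no quoting/escaping, no nesting, and values made
    only of digits are converted to int (an assumption for this example).
    """
    lines = [line.strip() for line in text.strip().splitlines() if line.strip()]
    header, rows = lines[0], lines[1:]
    # Header shape: name[N]{col1,col2,...}:
    name, rest = header.split("[", 1)
    count_str, rest = rest.split("]", 1)
    columns = rest[rest.index("{") + 1 : rest.index("}")].split(",")
    if int(count_str) != len(rows):
        raise ValueError(f"header declares {count_str} rows, found {len(rows)}")

    records = []
    for row in rows:
        values = row.split(",")
        if len(values) != len(columns):
            raise ValueError(f"expected {len(columns)} values, got {row!r}")
        # Each row is just values; the column names come from the header
        records.append({c: int(v) if v.isdigit() else v for c, v in zip(columns, values)})
    return name, records


toon = """
users[3]{id,name,country,purchases,segment}:
  1,User 1,DE,8,standard
  2,User 2,FR,38,churn_risk
  3,User 3,IT,27,new
"""
name, users = parse_toon_table(toon)
print(json.dumps({name: users}, indent=2))
```

The first record it recovers matches the JSON object shown earlier, field for field.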

The question is not “Is TOON more compact?” — that’s obvious.

The real question is:

“How much does TOON help beyond just using JSON properly?”

A tiny experiment: JSON vs minified JSON vs TOON-like

To get a feeling for this, I ran a small experiment in a notebook:

1. I generated fake user data with the fields `id`, `name`, `country`, `purchases`, and `segment`.

2. Then I encoded the same data in three formats:

- JSON pretty — human-friendly, with indentation and newlines.

- JSON minified — no extra spaces or newlines.

- TOON-like format — a simple tabular structure:

`users[N]{cols}:` as a header, followed by one `value1,value2,...` line per row.

(Not a full TOON implementation, but close enough for measuring token trends.)

3. For each format I measured:

- Token count (using the `cl100k_base` tokenizer).

- Character count (just to sanity-check).

4. Then I repeated this for different dataset sizes: 10, 100, 1,000, and 5,000 users.
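If you want a starting point before opening the notebook, here is a minimal Python sketch of the same setup. It is not the original notebook, just an approximation under a few assumptions: the `country` and `segment` values are borrowed from the three example rows, the `purchases` range is made up, the TOON-like encoder is the simplified one from step 2 (no quoting), and token counts come from the `tiktoken` package's `cl100k_base` encoding only when it is installed and loadable; otherwise the sketch reports character counts alone.

```python
import json
import random

COUNTRIES = ["DE", "FR", "IT"]  # taken from the example rows
SEGMENTS = ["standard", "churn_risk", "new"]


def make_users(n: int, seed: int = 42) -> list[dict]:
    """Generate n fake users with the five fields used in the experiment."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    return [
        {
            "id": i,
            "name": f"User {i}",
            "country": rng.choice(COUNTRIES),
            "purchases": rng.randint(0, 50),  # assumed range
            "segment": rng.choice(SEGMENTS),
        }
        for i in range(1, n + 1)
    ]


def to_json_pretty(users: list[dict]) -> str:
    return json.dumps(users, indent=2)


def to_json_minified(users: list[dict]) -> str:
    # separators=(",", ":") drops the spaces json.dumps adds by default
    return json.dumps(users, separators=(",", ":"))


def to_toon_like(users: list[dict], name: str = "users") -> str:
    """Columns declared once, then one row of values per user.
    No quoting or escaping: values must not contain commas or newlines."""
    cols = list(users[0].keys())
    header = f"{name}[{len(users)}]{{{','.join(cols)}}}:"
    rows = ["  " + ",".join(str(u[c]) for c in cols) for u in users]
    return "\n".join([header, *rows])


def make_token_counter():
    """Return a cl100k_base token counter, or None if tiktoken is unavailable."""
    try:
        import tiktoken
        enc = tiktoken.get_encoding("cl100k_base")
    except Exception:  # not installed, or the encoding file could not be fetched
        return None
    return lambda text: len(enc.encode(text))


FORMATS = {
    "json_pretty": to_json_pretty,
    "json_minified": to_json_minified,
    "toon_like": to_toon_like,
}


def measure(n: int, count_tokens=None) -> dict:
    """Encode n users in each format and report character (and token) counts."""
    users = make_users(n)
    results = {}
    for label, encode in FORMATS.items():
        text = encode(users)
        tokens = count_tokens(text) if count_tokens else None
        results[label] = {"chars": len(text), "tokens": tokens}
    return results


def run_experiment(sizes=(10, 100, 1_000, 5_000)) -> None:
    count_tokens = make_token_counter()
    metric = "tokens" if count_tokens else "chars"
    for n in sizes:
        r = measure(n, count_tokens)
        pretty = r["json_pretty"][metric]
        minified = r["json_minified"][metric]
        toon = r["toon_like"][metric]
        print(
            f"n={n:>5} {metric}: pretty={pretty} minified={minified} toon_like={toon} "
            f"| saved vs pretty {1 - toon / pretty:.0%}, vs minified {1 - toon / minified:.0%}"
        )
```

Calling `run_experiment()` prints one line per dataset size. Since the generated data and encoder details may differ from the notebook, treat its output as a trend check rather than a reproduction of the exact numbers.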

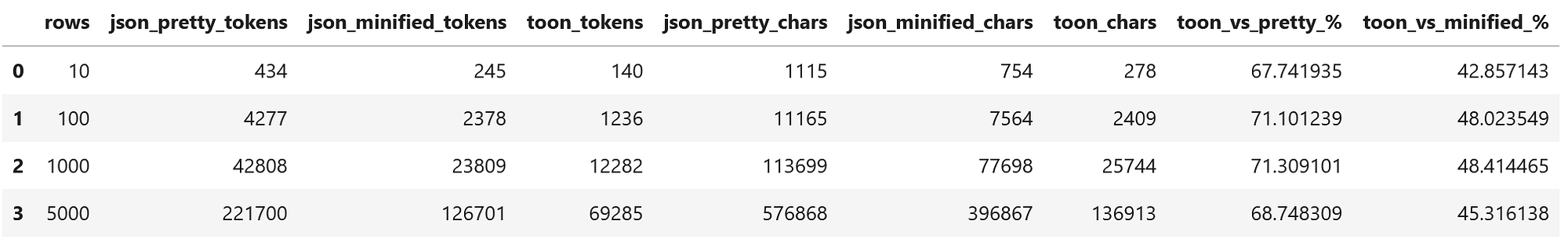

The numbers

Here are the results of the experiment (token counts per format and relative savings of TOON-like):

A few observations:

TOON-like vs pretty JSON

- Huge savings: around 69–71% fewer tokens.

- This matches the kind of numbers people like to show in slides and tweets.

TOON-like vs minified JSON

Still very solid: around 45–48% fewer tokens.

But now we’re in a very different conversation:

- Pretty JSON → TOON-like: wow, massive improvement.

- Minified JSON → TOON-like: still good, but no longer the night-and-day story.

Minifying JSON alone already saves a lot:

- In the 5,000-row case:

- Pretty JSON: 221,700 tokens

- Minified JSON: 126,701 tokens

→ Just removing whitespace cut about 43% of tokens with zero change in format semantics.
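These percentages are simple ratios, and savings from successive steps compose multiplicatively rather than adding up. Here is a small Python check using the 5,000-row token counts above. The helper names are illustrative, and the 45% and 48% inputs are just the ends of the reported TOON-like vs minified range (which spans all dataset sizes), so the combined figure is a rough consistency check, not a reproduction:

```python
def savings(baseline: float, candidate: float) -> float:
    """Fraction of tokens saved by `candidate` relative to `baseline`."""
    return 1 - candidate / baseline


def compose(*step_savings: float) -> float:
    """Total savings from applying several reductions one after another."""
    remaining = 1.0
    for s in step_savings:
        remaining *= 1 - s  # each step only shrinks what is left
    return 1 - remaining


pretty, minified = 221_700, 126_701  # 5,000-row token counts from the experiment
minify_only = savings(pretty, minified)
print(f"minify alone: {minify_only:.1%}")  # minify alone: 42.9%

for toon_vs_min in (0.45, 0.48):
    # prints 68.6% for the 45% input and 70.3% for the 48% input
    print(f"minify + TOON-like ({toon_vs_min:.0%}): {compose(minify_only, toon_vs_min):.1%}")
```

Both results land close to the 69–71% reported for TOON-like vs pretty JSON, so the three sets of numbers hang together.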

So yes, TOON-like formatting is very efficient — especially compared to JSON as most people send it today (pretty-printed). But if you take 5 minutes to treat JSON as a machine format instead of a UI, the remaining benefits of switching format are smaller than the hype suggests.

What these numbers really tell us

From this tiny experiment, my takeaways are:

1. If you send pretty-printed JSON to an LLM, you’re burning tokens for no good reason.

2. TOON (or TOON-like formats) can be a strong optimization for:

- Large, uniform, structured datasets (logs, metrics, user tables, product catalogs…).

- High-volume pipelines where every token matters.

3. But a big chunk of the “TOON vs JSON” story is actually:

- “TOON vs badly used JSON”.

Once JSON is:

- minified,

- cleaned up,

- and reasonably designed,

the incremental savings of moving to another format are still valuable… but no longer magical.
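What “minified and cleaned up” can look like in practice: a minimal Python sketch that keeps only the fields a prompt needs, drops null or empty values, and serializes without whitespace. The `notes` field and the `keep` list in the example are hypothetical; which fields are safe to drop depends entirely on the task.

```python
import json


def compact_json(records: list[dict], keep: list[str]) -> str:
    """Keep only selected fields, drop null/empty values, and minify."""
    cleaned = []
    for rec in records:
        # Missing keys come back as None and are skipped; 0 and False are kept
        slim = {k: rec[k] for k in keep if rec.get(k) not in (None, "", [], {})}
        cleaned.append(slim)
    # ensure_ascii=False keeps characters like "ü" as-is instead of \u00fc
    # escapes, which are longer and typically cost more tokens
    return json.dumps(cleaned, separators=(",", ":"), ensure_ascii=False)


records = [
    {"id": 1, "name": "User 1", "country": "DE", "purchases": 8,
     "segment": "standard", "notes": None},  # "notes" is a hypothetical field
]
print(compact_json(records, keep=["id", "country", "purchases", "segment", "notes"]))
# [{"id":1,"country":"DE","purchases":8,"segment":"standard"}]
```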

Where TOON shines — and where JSON is still just fine

Based on both the experiment and real-world projects, I’d say:

TOON (or TOON-like formats) shine when:

1. Your data is tabular and homogeneous:

- Many rows, same columns.

- Events, logs, transactions, product lists, user profiles.

2. You send large chunks of structured data into the model:

- “Analyze this customer table.”

- “Find patterns in these logs.”

- “Generate recommendations over this catalog.”

In those cases, the ability to declare columns once and stream rows is a perfect fit.

JSON still makes a lot of sense when:

1. Your data is deeply nested or irregular:

- Lots of optional fields.

- Complex hierarchies.

2. You need tight integration with existing systems:

- APIs, databases, microservices, logging infrastructure…

- All of them already speak JSON.

3. You’re doing lightweight prompts where the payload isn’t the main cost driver.

And in many architectures, you’ll probably end up with both:

- JSON as the standard format of your application.

- A TOON-like (or other compact) representation as a prompt-time optimization layer before calling the model.
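A minimal Python sketch of that split: records stay ordinary JSON-compatible dicts inside the application, and only the function that builds the prompt decides how to serialize them. The heuristic (flat records with the same keys and scalar values become a TOON-like table; anything else becomes minified JSON) mirrors the two lists above. The function names and the simplified encoder are assumptions for illustration, not the official TOON library, and the encoder falls back to JSON whenever a string would need quoting.

```python
import json

SCALARS = (str, int, float, bool, type(None))


def is_tabular(records: list) -> bool:
    """Flat dicts with the same keys (in the same order) and only scalar values."""
    if not records or not all(isinstance(r, dict) for r in records):
        return False
    keys = list(records[0])
    return all(
        list(r) == keys and all(isinstance(v, SCALARS) for v in r.values())
        for r in records
    )


def _cell(value) -> str:
    # Strings as-is; numbers, booleans and None in JSON spelling (8, true, null)
    return value if isinstance(value, str) else json.dumps(value)


def encode_for_prompt(records: list, name: str = "rows") -> str:
    """TOON-like table for uniform flat records, minified JSON for everything else."""
    needs_quoting = any(
        isinstance(v, str) and ("," in v or "\n" in v)
        for r in records if isinstance(r, dict) for v in r.values()
    )
    if not is_tabular(records) or needs_quoting:
        # This simplified encoder has no quoting, so fall back to JSON
        return json.dumps(records, separators=(",", ":"))
    cols = list(records[0])
    header = f"{name}[{len(records)}]{{{','.join(cols)}}}:"
    rows = ["  " + ",".join(_cell(r[c]) for c in cols) for r in records]
    return "\n".join([header, *rows])


def build_prompt(instruction: str, records: list, name: str = "rows") -> str:
    """The app keeps plain JSON-style data; conversion happens only here."""
    return f"{instruction}\n\n{encode_for_prompt(records, name)}"


users = [
    {"id": 1, "name": "User 1", "country": "DE", "purchases": 8, "segment": "standard"},
    {"id": 2, "name": "User 2", "country": "FR", "purchases": 38, "segment": "churn_risk"},
    {"id": 3, "name": "User 3", "country": "IT", "purchases": 27, "segment": "new"},
]
print(build_prompt("Find churn risks in this table.", users, name="users"))
```

Keeping the conversion at this single boundary means APIs, storage, and logs never see the compact format, and switching it off is a one-line change.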

Bigger levers than JSON vs TOON

This is the part where my day job kicks in 😅

Working with customers on GenAI solutions, the biggest cost problems almost never start with the data format.

The huge savings usually come from:

Sending less data:

- Don’t ship the entire customer history every time.

- Use retrieval / filtering / vector search to narrow context.

Cleaning prompts:

- Remove repeated instructions.

- Avoid boilerplate walls of text.

Choosing the right model:

- A smaller, cheaper model that’s good enough usually beats an overkill model.

Caching and reuse:

- Cache responses when inputs don’t change.

- Split prompts so parts can be reused.
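For “cache responses when inputs don’t change”, here is a minimal in-memory sketch in Python. The cache key is a hash of the model name plus the exact prompt, so any change to either is a cache miss. `call_model` stands in for whatever client function you actually use (it is hypothetical here, as is the `small-model` name), and a production cache would also need expiry and persistent storage.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """In-memory cache of model responses keyed by (model, prompt)."""

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call_model = call_model  # hypothetical: (model, prompt) -> response text
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # The NUL separator keeps ("ab", "c") and ("a", "bc") from colliding
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, prompt)  # only paid on a miss
        self._store[key] = response
        return response


calls = []


def fake_model(model: str, prompt: str) -> str:
    calls.append(prompt)
    return f"[{model}] answer for a {len(prompt)}-char prompt"


cache = ResponseCache(fake_model)
cache.get("small-model", "Summarize: users[3]{id,name,country,purchases,segment}: ...")
cache.get("small-model", "Summarize: users[3]{id,name,country,purchases,segment}: ...")
print(cache.hits, cache.misses, len(calls))  # 1 1 1
```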

Format is a second-order optimization. It absolutely matters at scale — and TOON is a smart idea — but if your prompts are noisy, the format won’t save you.

So… is TOON worth it?

My honest answer (today):

Yes, TOON is a good tool to have in your toolbox.

No, JSON is not “dead” or obsolete because of it.

And if you’re starting to optimize LLM costs, I would:

- Minify and clean up your JSON.

- Remove irrelevant data from prompts.

- Try smaller models and add caching.

- Then, for heavy structured payloads, experiment with TOON.

I’m not anti-TOON and I’m not blindly pro-JSON.

I’m pro measuring.

Over to you

I’d love to hear from people running real systems:

- Have you tried TOON (or similar formats) in production, not just in benchmarks?

- Where did you see the biggest impact: format, prompt design, model choice, or architecture?

- Would you rather add a new format to your stack or first fix how you’re using JSON?

Try it yourself & stay in touch 🙂

If you want to play with the numbers yourself, I’ve published the notebook I used for this experiment on my GitHub. It’s a small, self-contained notebook that you can easily adapt to your own datasets, tweak the formats, or plug in the tokenizer/model you’re actually using in production.

If you’re interested in more hands-on experiments around LLMs, data formats, and how all this fits into real architectures, you can follow my work here:

- LinkedIn — where I share day-to-day thoughts, customer stories, and demos.

- Medium — for deeper technical write-ups and step-by-step walkthroughs (including this one).

- My website (cristinavaras.com), where I’m starting to centralize my latest talks, articles, demos, and experiments.

If you run your own JSON vs TOON tests (or get completely different results!), I’d genuinely love to see them. Tag me or reach out — this space moves fast, and we all learn much faster when we share what actually works, beyond the hype.

Happy coding! 🙂